Root Causes of the Pharmaceutical R&D Productivity Crisis

Why are success rates in pharmaceutical R&D so low? And unlike many other industries where technology has accelerated R&D, why has the productivity trend in pharmaceutical R&D been worsening over the past few decades? As the reasons are not obvious, I thought it would be illuminating to many of my blog readers if I summarized the key findings of the most influential studies and papers investigating the root causes of the pharmaceutical R&D productivity crisis. Full references to all the papers mentioned are provided at the end of this article.

The Unrelenting Decline in Pharmaceutical R&D Productivity

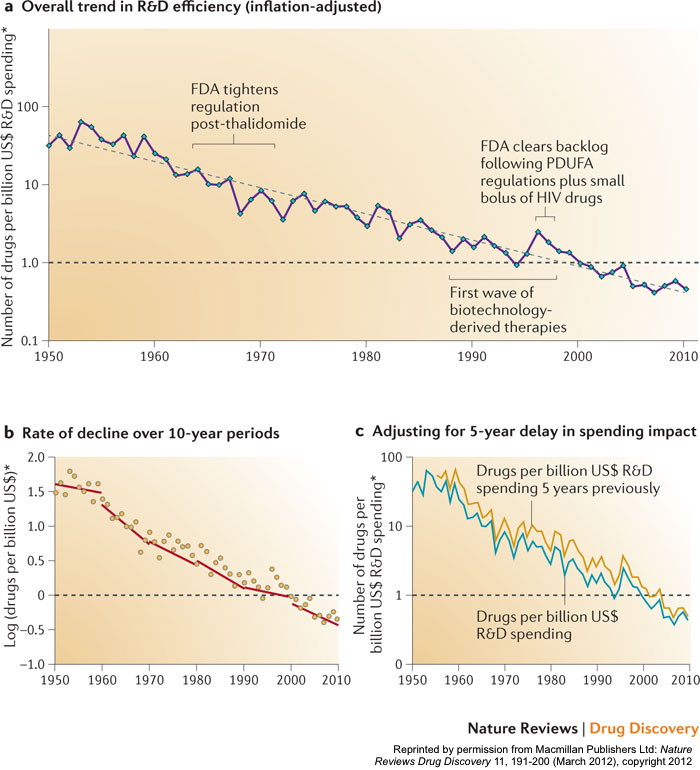

Aggregated at the level of the entire industry, pharmaceutical R&D productivity has been declining for the past six decades. A recent study (Scannell et al. 2012) estimated that:

“The number of new drugs approved per billion US dollars spent on R&D has halved roughly every 9 years since 1950, falling around 80‐fold in inflation‐adjusted terms”.

That same study even coined the phrase “Eroom’s Law” (see the chart above) to describe this phenomenon – so named as the reverse of the well-known “Moore’s Law” that describes the exponential increase in computing power over time in the information technology industry.
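As a rough sanity check on how the two figures in the quote fit together, here is a minimal Python sketch. It assumes a 60-year window (1950 to 2010), which is my reading of "the past six decades" rather than a number taken from the paper, and the function names are my own. It treats the decline as a simple exponential with a constant halving time.

```python
import math

def fold_decline(years, halving_time):
    """Fold reduction after `years` if the quantity halves every `halving_time` years."""
    return 2 ** (years / halving_time)

def implied_halving_time(years, fold):
    """Halving time implied by a given fold reduction over `years`."""
    return years / math.log2(fold)

YEARS = 60  # assumed window: 1950 to 2010

# A strict 9-year halving time over 60 years gives a ~100-fold decline.
print(f"{fold_decline(YEARS, 9):.1f}")           # 101.6

# An 80-fold decline over 60 years implies a halving time of ~9.5 years.
print(f"{implied_halving_time(YEARS, 80):.1f}")  # 9.5
```

On these assumptions, "roughly every 9 years" and "around 80-fold" are consistent with each other once rounding is allowed for: an 80-fold fall over six decades corresponds to a halving time of about nine and a half years.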

This steady decline is even more dramatic when one considers the huge advances that have been made in the scientific understanding of disease and in certain pharmaceutical R&D-enabling technologies (combinatorial chemistry, high-throughput compound screening, genomic sequencing and three-dimensional protein structure mapping to name a few), the equivalent of which in other industries would have greatly enhanced R&D productivity.

Downsides of Scale and Industrialization

By the end of the last decade, some astute observers had noted that consolidation in the pharmaceutical industry and the concomitant scaling up and industrialization of its R&D infrastructure had created diseconomies of scale, severely reducing the innovative culture of the R&D organizations and the creative risk-taking of their scientists. The then CEO of one of the largest big pharmas (GSK) published an article (Garnier 2008) arguing that organizational scale and complexity “can cause passionate engagement and courageous risk taking to give way to risk aversion, promises with no obligation to deliver, and bureaucratic inertia”. Furthermore, he propounded that the leaders of major pharmaceutical corporations had “incorrectly assumed that R&D was scalable, could be industrialized, and could be driven by detailed metrics (scorecards) and automation. The grand result: a loss of personal accountability, transparency, and the passion of scientists in discovery and development”.

A major quantitative study published soon afterwards by an experienced Eli Lilly executive (Munos 2009) reported a startling insight: the average annual output of approved new molecular entities (“NMEs”) per major pharmaceutical company had remained pretty much constant for nearly 60 years, irrespective of the company’s size or level of R&D investment. This fact explained why, with the industry consolidating into a smaller number of very large companies, the total number of approved NMEs being generated by the large companies was falling. To quote from the Munos paper, “Since the early 1980s [until 2007], the share of NMEs discovered by large pharmaceutical companies declined from 75% to 35%”, with the slack being taken up by small companies and academic research institutes.
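The consolidation argument can be sketched in a few lines of Python. The company counts and the per-company rate below are purely hypothetical numbers chosen for illustration, not figures from the Munos paper; the only thing taken from the paper is the premise that output per company stays roughly constant regardless of size.

```python
def total_annual_nmes(num_companies, nmes_per_company_per_year):
    """Total yearly NME output if every company produces at the same fixed rate."""
    return num_companies * nmes_per_company_per_year

RATE = 0.5  # hypothetical NMEs per company per year, assumed independent of size

before_mergers = total_annual_nmes(20, RATE)  # 20 hypothetical large companies
after_mergers = total_annual_nmes(10, RATE)   # the same companies merged pairwise

print(before_mergers, after_mergers)  # 10.0 5.0
```

If the per-company rate really does not scale with size, then halving the number of companies halves their combined output, however much the merged companies spend.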

A key success factor for the smaller companies and academia cited by Munos was diversity:

“By virtue of their number, small firms collectively can explore far more directions, and investigate areas that their larger, more conservative competitors avoid. However, only a small fraction of these small companies will be rewarded with an FDA approval. So individually, [they are] a much less reliable source of NMEs than large companies, but collectively, they produce more, for less”.

Munos goes on to argue: “The industry must rethink its process culture. Success in the pharmaceutical industry depends on the random occurrence of a few ‘black swan’ products. Common processes that are standard practice in most companies create little value in an industry dominated by blockbusters. These include developing sales forecasts for new products, which are inaccurate nearly 80% of the time. Another example is portfolio management, which has been widely adopted by the industry as a risk management tool, but has failed to protect it from patent cliffs. During the past couple of decades, there has been a methodical attempt to codify every facet of the drug business into sophisticated processes, in an effort to reduce the variances and increase the predictability. This has produced a false sense of control over all aspects of the pharmaceutical enterprise, including innovation”.

Unrelenting Pressures from Health Economics

Pammolli and his colleagues at the IMT Institute conducted a comprehensive statistical analysis of a database of R&D projects covering more than 28,000 potential medicinal compounds investigated since 1990 (as reported in Pammolli et al. 2011). They determined that the average number of approved NMEs generated per year by R&D projects started between 2000 and 2004 was less than half the equivalent statistic for R&D projects started between 1990 and 1999. Their analysis of productivity (as measured in terms of attrition rates, development times and the number of NMEs launched) concluded that its sharp decline was strongly correlated with:

“An increasing concentration of R&D investments in areas in which the risk of failure is high, which correspond to unmet therapeutic needs and unexploited biological mechanisms”.

In other words, companies were deliberately choosing to invest in areas with inherently lower probabilities of success since these “translate into lower expected number of competitors and, therefore, into weaker and slower competition and higher expected prices and revenues”. As the paper notes, “Both private and public [healthcare] payers discourage incremental innovation and investments in follow-on drugs in already established therapeutic classes, mostly by the use of reference pricing schemes and bids designed to maximize the intensity of price competition among different molecules. Indeed, in established markets, innovative patented drugs are often reimbursed at the same level as older drugs”.

Having coined “Eroom’s Law”, Scannell and his colleagues go on in their paper (Scannell et al. 2012) to describe four primary factors driving the productivity decline. The first of these they entertainingly refer to as the “better than the Beatles” problem. In their words, “Imagine how hard it would be to achieve commercial success with new pop songs if any new song had to be better than the Beatles, if the entire Beatles catalogue was available for free, and if people did not get bored with old Beatles records”.

“Yesterday’s blockbuster is today’s generic. An ever-improving back catalogue of approved medicines increases the complexity of the development process for new drugs”.

This phenomenon is essentially the driver identified by the Pammolli paper, i.e. companies invest in scientifically tougher areas because they cannot achieve an acceptable return, even if successful, in areas where there are already sufficiently effective and safe drugs at very low generic prices. Healthcare payers do not switch to new products for a small incremental benefit because the cost of the existing alternatives is so much lower. Furthermore, health economics is unusual in that success at delivering healthcare increases its overall costs going forward – as people live longer, a growing retired population needs healthcare over a longer lifetime without a corresponding increase in the working population to pay for it. Healthcare payers worldwide are thus very focused on cost effectiveness since their budgets are under enormous pressure. Companies, in turn, increasingly need to adopt new bioscience technologies and innovative treatment ideas in an attempt to create new therapeutics that significantly raise the bar.

An Aside: As the Scannell paper notes, the above phenomenon is different to the often-quoted “low hanging scientific fruit” problem. The latter suggests that productivity has declined because all the “easy” drugs have been made. This argument might be true to some extent but nevertheless confuses “undiscovered” with “difficult”. Many of the discoveries that led to breakthrough therapies historically owed a lot to serendipity-induced leaps of logic; they would have been very difficult to derive via incremental thinking. In other words, some of the fruit fell from high up the tree!

Regulator and Management Behaviors

The second primary factor described by the Scannell paper is what they term the “cautious regulator” problem. New technologies and innovative treatment ideas translate to many unknowns and new risks. Public distrust of the pharmaceutical industry and conservatism on the part of regulators have led to the imposition of ever higher hurdles for efficacy, safety, and quality, as articulated in the aforementioned paper:

“Each real or perceived sin by the industry, or genuine drug misfortune, leads to a tightening of the regulatory ratchet, and the ratchet is rarely loosened, even if it seems as though this could be achieved without causing significant risk to drug safety”.

The final two primary factors highlighted in the Scannell paper (the “throw money at it tendency” and the “basic research–brute force bias”) are both closely related to the behavioral tendencies of top management in large publicly listed companies. These managers are expected to deliver predictable revenue and profit streams in return for specific costs expended, with such cash flows scrutinized and modeled extensively by independent investment analysts. This expectation leads (consciously or otherwise) to regarding individual projects and the overall R&D organization as “machines” that can be “engineered” to convert certain specified inputs into predictable outputs, with productivity enhanced through the control of a small number of key management “levers”. I refer to this logic as “management reductionism”. Adopting it leads further to the belief that once you have engineered the machine, its outputs can be increased through corresponding increases in the relevant inputs – to get more out, you simply throw more money at it!

What further reinforced the management reductionism mindset during the 1990s was the increasing popularity of “molecular reductionism” (as described for example in Van Regenmortel 2004) which essentially argued that the optimum route to treating a particular disease is based on finding and correctly modulating an appropriate single biological target. The combination of management reductionism and molecular reductionism was temptingly attractive to the leaders of the large pharmaceutical companies, as well as their management consultants and bankers. The large pharmaceutical companies invested huge sums in building industrialized “R&D machines” (see Thong 2013 for a more detailed articulation of this phenomenon) that aimed, with a brute force mentality, to systematically (i) identify the right biological targets for the diseases they were prioritizing, and (ii) generate drug compounds for addressing these targets via a scalable industrial process.

As we can now see in hindsight, these R&D machines actually had the opposite effect. In truth, the human body is hugely more complex than molecular reductionism would suggest, and our base of knowledge in human biology lags far behind many other fields such as information technology or aerospace. In most cases, a biological target that looked promising in the test tube did not sufficiently affect real-life disease when modulated with a custom-designed drug in a clinical setting, or worse still, led to unacceptable side effects – owing to the complexity of the targeted biological pathway, as well as to effects on other biological pathways impacted in the process. Also, what was hitherto regarded as a single disease was often actually a group of different diseases that had similar symptoms but quite different biological targets as their root causes – when a candidate drug modulating one specific target was tested on the whole disease population (as traditionally defined), the aggregate results were inconclusive. Furthermore, most biological targets play a potential role in several seemingly disparate diseases – where they would have the most scientific and medical impact often did not align with the commercial priorities of the companies, leading to wasted efforts on the less viable applications. In addition, the complexity of the human body made it very difficult to create drug molecules that avoided unacceptable side effects and were also practical from the perspective of drug absorption, distribution, metabolism and excretion. Last but not least, all the aforementioned complexities led to highly unpredictable and complex R&D projects which were not amenable to being run with a process based on management reductionism. To make matters worse, as mentioned earlier, the industrialization of R&D also served to negatively impact creativity, innovation and decision-making by the R&D scientists and their managers.

Summary and Concluding Remarks

In summary, consolidating the arguments outlined above, there are four root causes of the R&D productivity crisis in pharmaceuticals:

- Relentless Health Economics Pressures: The ever-growing back catalog of cheap generic drugs continuously raises the bar for new medicines. It incentivizes companies to prioritize tougher medical challenges with inherently lower probabilities of success. And the continuing increases in lifespan and quality of life expectations brought about by improving healthcare serve to put even more pressure on the budgets of healthcare payers, magnifying the height of the mountain that new drugs need to climb over the old generic ones.

- Increasing Regulatory Hurdles: Health economics pressures push pharmaceutical R&D to experiment with new bioscience technologies and innovative treatment approaches. But this in turn increases public concerns about safety and encourages regulators to increase their hurdles in terms of the data requirements, statistical sample size and patient group specificity needed for marketing authorization.

- Immature State of Knowledge and Molecular Reductionism: Our global state of knowledge in human biology, especially as regards the complex inter-relationships that constitute biological pathways, is still far behind other scientific and technical areas. Historical pursuit of the molecular reductionism hypothesis led the industry to overly simplistic approaches and it is now playing catch-up.

- Management Reductionism and Diseconomies of Scale: An overly simplistic management paradigm led to R&D consolidation and industrialization, which in turn engendered a lack of creative diversity, a paucity of innovations and poor management decision making. Again, the industry is now playing catch-up to address these shortcomings.

On the positive front, the large pharmaceutical companies have aggressively embraced R&D collaborations as a means to increase diversity and tap into the more creative and innovative cultures in small companies and academia. The industry has also recognized the scientific complexities outlined earlier, becoming more wary of simplistic molecular reductionism. For example, a recent paper by a group of AstraZeneca managers and scientists (Cook et al. 2014) identified five technical determinants of success in drug R&D projects (“right target”, “right tissue”, “right patient”, “right safety” and “right commercial potential”) which directly focus on these scientific challenges.

Integrating the above five technical aspects and enabling an overall improvement in productivity will also require a shift away from historical management reductionism. As mentioned in the Cook paper, a change in organizational culture and incentives is needed, away from “a volume-based progression mentality to truth-seeking one focused on project quality and depth of scientific understanding”. Furthermore, I believe that smarter and more sophisticated project management and governance approaches are needed – the journey for most drug R&D projects is not linear and cannot be mapped out precisely in advance. Instead, the journey comprises many twists and turns, filled with unexpected challenges and opportunities, including multiple ways to generate value which were not anticipated at the outset.

Whether the industry’s productivity decline can ultimately be halted or reversed, only time will tell!

References

- Cook, D., Brown, D., Alexander, R., March, R., Morgan, P., Satterthwaite, G. and Pangalos, M.N. 2014. Lessons learned from the fate of AstraZeneca’s drug pipeline. Nature Reviews Drug Discovery, 13: 419–431, published online May 16, 2014. http://www.nature.com/nrd/journal/v13/n6/full/nrd4309.html

- Garnier, J-P. 2008. Rebuilding the R&D Engine in Big Pharma. Harvard Business Review May 2008.

- Munos, B. 2009. Lessons from 60 years of pharmaceutical innovation. Nature Reviews Drug Discovery Volume 8 December 2009.

- Pammolli, F., Magazzini, L. and Riccaboni, M. 2011. The productivity crisis in pharmaceutical R&D. Nature Reviews Drug Discovery Volume 10 June 2011.

- Scannell, J.W., Blanckley, A., Boldon, H. and Warrington, B. 2012. Diagnosing the decline in pharmaceutical R&D efficiency. Nature Reviews Drug Discovery Volume 11 March 2012.

- Thong, R. 2013. Transforming Biomedical R&D: Reflections on Two Decades of R&D Improvement Initiatives. SciTechStrategy April 17, 2013. https://scitechstrategy.com/2013/04/two-decades-of-biomedical-rd-improvement-initiatives/

- Van Regenmortel, M.H.V. 2004. Reductionism and complexity in molecular biology. EMBO Reports Volume 5 Issue 11 November 2004.